Valve Hardware Day 2006 - Multithreaded Edition

by Jarred Walton on November 7, 2006 6:00 AM EST - Posted in Trade Shows

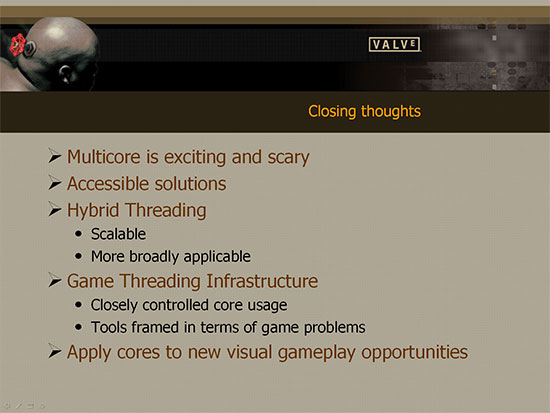

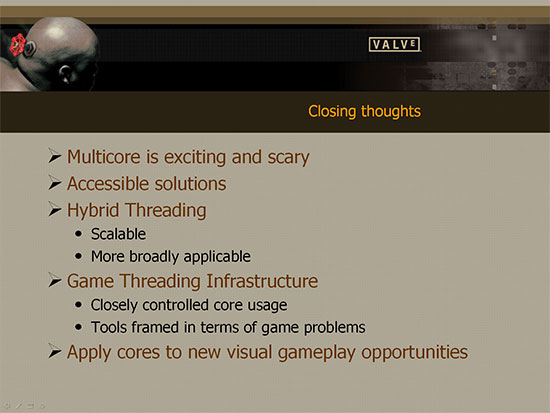

Closing Thoughts

At this point, we almost have more questions than we have answers. The good news is that it appears Valve and others will be able to make good use of multi-core architectures in the near future. How much those improvements will really affect the gameplay remains to be seen. In the short term, at least, it appears that most of the changes will be cosmetic in nature. After all, there are still a lot of single core processors out there, and you don't want to cut off a huge portion of your target market by requiring multi-core processors in order to properly experience a game. Valve is looking to offer equivalent results regardless of the number of cores for now, but the presentation will be different. Some effects will need to be scaled down, but the overall game should stay consistent. At some point, of course, we will likely see the transition made to requiring dual core or even multi-core processors in order to run the latest games.
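To make the "same game, different presentation" idea concrete, here is a minimal sketch of how an engine might scale purely cosmetic effects with the number of available cores. This is not Valve's code: the function names, the setting names, and every number are our own illustrative assumptions. The only point is that the core count changes the eye candy and nothing that affects gameplay.

```python
import os


def detect_cores() -> int:
    """Return the number of logical cores, falling back to 1 if unknown."""
    # os.cpu_count() can return None when the count cannot be determined.
    return os.cpu_count() or 1


def effect_settings(cores: int) -> dict:
    """Pick cosmetic settings for a core count (hypothetical tiers and values).

    Only presentation is scaled here; game rules never read these values.
    """
    if cores >= 4:
        return {"particle_budget": 4000, "cloth_sim": True, "debris_physics": True}
    if cores >= 2:
        return {"particle_budget": 2000, "cloth_sim": True, "debris_physics": False}
    return {"particle_budget": 500, "cloth_sim": False, "debris_physics": False}


if __name__ == "__main__":
    print(effect_settings(detect_cores()))
```

A single core system still runs the same game under this scheme; it simply gets the smallest effects budget.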

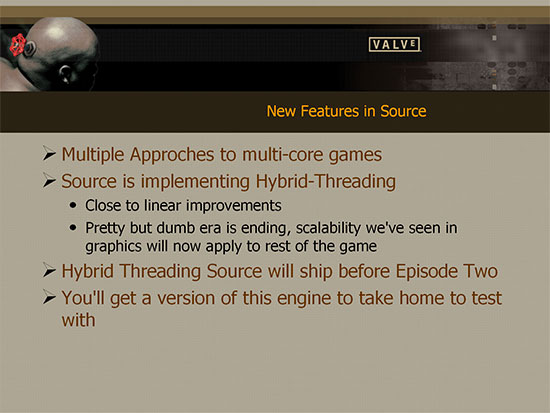

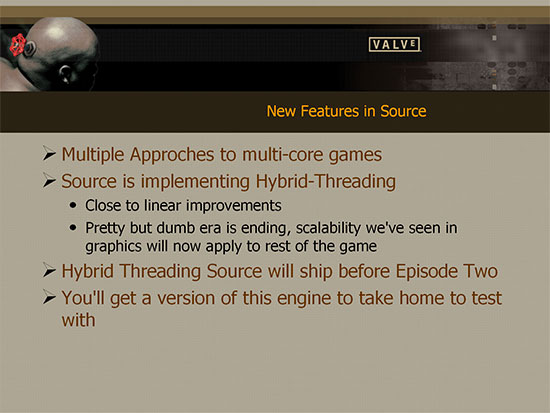

We had a lot of other questions for Valve Software, so we'll wrap up with some highlights from the discussions that followed their presentations. First, here's a quick summary of the new features that will be available in the Source engine in the Episode Two timeframe.

We mentioned earlier that Valve is looking for things to do with gaming worlds beyond simply improving the graphics: more interactivity, a better feeling of immersion, more believable artificial intelligence, etc. Gabe Newell stated that he felt the release of Kentsfield was a major inflection point in the world of computer hardware. Basically, the changes this will bring about are going to be far reaching and will stay with us for a long time. Gabe feels that the next major inflection point is going to be related to the post-GPU transition, although predicting exactly what it will be and when it will occur would require a much better crystal ball than we have access to. If Valve Software is right, however, the work that they are doing right now to take advantage of multiple cores will dovetail nicely with future computing developments. As we mentioned earlier, Valve is now committed to buying all quad core (or better) systems for their own employees going forward.

With regard to quad core processing, one of the questions raised was how the various platform architectures will affect overall performance. Specifically, Kentsfield is simply two Core 2 Duo dies placed within a single CPU package (similar to Smithfield and Presler relative to Pentium 4). In contrast, Athlon X2 and Core 2 Duo are two processor cores placed within a single die. There are quite a few options for getting quad core processing these days. Kentsfield offers four cores in one package; in the future we should see four cores in a single die; or you could go with a dual socket motherboard with each socket containing a dual core processor. On the extreme end of things, you could even have a four socket motherboard with each socket housing a single core processor. Valve admitted that they hadn't done any internal testing of four socket or even two socket platforms -- they are primarily interested in desktop systems that will see widespread use -- and much of their testing so far has been focused on dual core designs, with quad core being the new addition. Back to the question of CPU package design (two chips on a package vs. a single die): the current indication is that the performance impact of a shared package vs. a single die is "not enough to matter".

One area in which Valve has a real advantage over other game developers is in their delivery system. With Steam comes the ability to roll out significant engine updates; we saw Valve add HDR rendering to the Source engine last year, and their episodic approach to gaming allows them to iterate towards a completely new engine rather than trying to rewrite everything from scratch every several years. That means Valve is able to get major engine overhauls into the hands of consumers more quickly than other gaming companies. Steam also gives them other interesting options; for example, they talked about the potential to deliver games optimized specifically for quad core to those people who are running quad core systems. Because Steam knows what sort of hardware you are running, Valve can make sure in advance that people meet the recommended system requirements.

Perhaps one of the most interesting things about the multi-core Source engine update is that it should be applicable to previously released Source titles like the original Half-Life 2, Lost Coast, Episode One, Day of Defeat: Source, etc. The current goal is to get the technology released to consumers during the first half of 2007 -- sometime before Episode Two is released. Not all of the multi-core enhancements will make it into Episode Two or the earlier titles, but as time passes Source-based games should begin adding additional multi-core optimizations. It is still up to the individual licensees of the Source engine to determine which upgrades they want to use or not, but at least they will have the ability to add multithreading support.

With all of the work that has been done by Valve during the past year, what is their overall impression of the difficulty involved? Gabe put it this way: it was hard, painful work, but it's still a very important problem to solve. There are talented people that have been working on getting multithreading to work rather than on other stuff, so there has been a significant monetary investment. The performance they will gain is going to be useful, however, as it should allow them to create games that other companies are currently unable to build. Even more surprising is how the threading framework is able to insulate other programmers from the complexities involved. These "leaf coders" (i.e. junior programmers) are still able to remain productive within the framework, without having to be aware of the low-level threading problems that are being addressed. One of these programmers, for example, was able to demonstrate new AI code running on the multithreaded platform, and it only took about three days of work to port the code from a single threaded design to the multithreading engine. That's not to say that there aren't additional bugs that need to be addressed (there are certain race conditions and timing issues that become a problem with multithreading that you simply don't see in single threaded code), but over time the programmers simply become more familiar with the new way of doing things.
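As a rough illustration of what "insulating leaf coders" can look like, the sketch below splits the work in two. A small framework function owns the worker threads, and the AI routine is ordinary single threaded logic that takes one NPC and returns a new state. Everything here (`run_parallel`, `think`, the NPC fields) is a hypothetical example of ours, not Valve's framework. We also use Python's standard thread pool only to show the structure; Python threads will not show the speedups a native engine would see.

```python
from concurrent.futures import ThreadPoolExecutor


def run_parallel(update_fn, items, workers=4):
    """Framework side: apply update_fn to every item on a pool of threads.

    pool.map returns results in the same order as the inputs.
    """
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(update_fn, items))


def think(npc):
    """Leaf-coder side: plain decision logic with no locks or thread calls.

    It returns a new dict instead of mutating shared state, which sidesteps
    the race conditions that shared writes would introduce.
    """
    action = "flee" if npc["health"] < 30 else "attack"
    return {**npc, "action": action}


npcs = [{"id": i, "health": h} for i, h in enumerate([10, 80, 25, 55])]
updated = run_parallel(think, npcs)
```

The design choice that matters is that `think` never touches another NPC's data. If it wrote to shared state instead, the framework alone could not protect it from the timing bugs mentioned above.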

Another area of discussion that brought up several questions was support for other hardware. Specifically, there is now hardware like PhysX, as well as the potential for graphics cards to do general computations (including physics) instead of just pure graphics work. Support for these technologies is not out of the question according to Valve. The bigger issue is going to be adoption rate: they are not going to spend a lot of man-hours supporting something that less than 1% of the population is likely to own. If the hardware becomes popular enough, it will definitely be possible for Valve to take advantage of it.

As far as console hardware goes, the engine is already running on Xbox 360, with support for six simultaneous threads. The PC platform and Xbox 360 are apparently close enough that getting the software to run on both of them does not require a lot of extra effort. PS3, on the other hand.... The potential to support PS3 is definitely there, but it doesn't sound like Valve has devoted any serious effort to this platform as of yet. Given that the hardware isn't available for consumer purchase yet, that might make sense. The PS3 Cell processor does add some additional problems in terms of multithreading support. First, unlike Xbox 360 and PC processors, the processor cores available in Cell are not all equivalent. That means they will have to spend additional effort making sure that the software is aware of which cores can do which sorts of tasks best (or at all, as the case may be). Another problem that Cell creates is that there's not a coherent view of memory. Each core has its own dedicated high-speed local memory, so all of that has to be managed along with worrying about threading and execution capabilities. Basically, PS3/Cell takes the problems inherent with multithreading and adds a few more, so getting optimal utilization of the Cell processor is going to be even more difficult.
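The two extra problems described for Cell can be sketched in a few lines: cores that differ in what they can run, and per-core memory that other cores cannot see. The toy model below is our own simplification, with made-up core names and task kinds; it is not based on any Sony or Valve API. A scheduler first has to find a core that supports the task, and then has to copy data into and out of that core's local store explicitly.

```python
from dataclasses import dataclass, field


@dataclass
class Core:
    name: str
    capabilities: set                                # task kinds this core can run
    local_store: dict = field(default_factory=dict)  # private to this core


def dispatch(kind, key, fn, cores, main_memory):
    """Run fn on the first core that supports `kind` and return that core's name."""
    core = next((c for c in cores if kind in c.capabilities), None)
    if core is None:
        raise ValueError(f"no core can run task kind {kind!r}")
    # Explicit copy in: the core cannot read main memory in place in this model.
    core.local_store["input"] = list(main_memory[key])
    core.local_store["output"] = fn(core.local_store["input"])
    # Explicit copy out, then free the local store for the next task.
    main_memory[key + "_result"] = core.local_store["output"]
    core.local_store.clear()
    return core.name


# Hypothetical layout: two specialized helper cores and one general-purpose core.
cores = [
    Core("helper0", {"math"}),
    Core("helper1", {"math"}),
    Core("main", {"control", "math"}),
]
main_memory = {"positions": [1.0, 2.0, 3.0]}
ran_on = dispatch("math", "positions", lambda xs: [x * 2 for x in xs], cores, main_memory)
```

On a PC or Xbox 360 style design, both the capability lookup and the copy steps would be unnecessary, which is the extra burden the paragraph above describes.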

One final area that was of personal interest is Steam. Unfortunately, one of the biggest questions with regard to Steam is something Valve won't discuss; specifically, we'd really like to know how successful Steam has been as a distribution network. Valve won't reveal how many copies of Half-Life 2 (or any of their other titles) were sold on Steam. As the number of titles showing up has been increasing quite a bit, however, it's safe to say Steam will be with us for a while. This is perhaps something of a no-brainer, but the benefits Valve gets by selling a game via Steam rather than at retail are quite tangible. Valve did confirm that any purchases made through Steam are almost pure profit for them. Before you get upset with that revelation, though, consider: whom would you rather support, the game developers or the retail channel, publishers, and distributors? There are plenty of people that will willingly pay more money for a title if they know the money will all get to the artists behind the work. Valve also indicated that a lot of the "indie" projects that have made their way over to Steam have become extremely successful compared to their previously lackluster retail sales -- and the creators see a much greater percentage of the gross sales vs. typical distribution (even after Valve gets its cut). So not only does Steam do away with scratched CDs, lost keys, and disc swapping, but it has also started to become a haven for niche products that might not otherwise be able to reach their target audience. Some people hate Steam, and it certainly isn't perfect, but many of us have been very pleased with the service and look forward to continuing to use it.

Some of us (author included) have been a bit pessimistic on the ability of game developers to properly support dual cores, let alone multi-core processors. We would all like for it to be easy to take advantage of additional computational power, but multithreading certainly isn't easy. After the first few "SMP optimized" games came and went, the concerns seemed to be validated. Quake 4 and Call of Duty 2 both were able to show performance improvements courtesy of dual core processors, but for the most part these performance gains only came at lower resolutions. After Valve's Hardware Day presentations, we have renewed hope that games will actually be able to become more interesting again, beyond simply improving visuals. The potential certainly appears to be there, so now all we need to see is some real game titles that actually make use of it. Hopefully, 2007 will be the year that dual core and multi-core gaming really makes a splash. Looking at the benchmark results, are four cores twice as exciting as two cores? Perhaps not yet for a lot of people, but in the future they very well could be! The performance offered definitely holds the potential to open up a lot of new doors.
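Why would dual core gains show up only at lower resolutions? A simplified model helps: each frame takes roughly as long as the slower of the CPU work and the GPU work, so a faster CPU only helps while the CPU is the bottleneck. The sketch below uses that model with invented timings (they are not measurements from Quake 4, Call of Duty 2, or any other game).

```python
def fps(cpu_ms: float, gpu_ms: float) -> float:
    """Frames per second when each frame waits on the slower of CPU and GPU."""
    return 1000.0 / max(cpu_ms, gpu_ms)


# Illustrative per-frame costs in milliseconds (assumed, not benchmarked).
CPU_SINGLE, CPU_DUAL = 10.0, 6.0     # CPU time with one core vs. two cores
GPU_LOW_RES, GPU_HIGH_RES = 5.0, 20.0

low_res_gain = fps(CPU_DUAL, GPU_LOW_RES) / fps(CPU_SINGLE, GPU_LOW_RES)
high_res_gain = fps(CPU_DUAL, GPU_HIGH_RES) / fps(CPU_SINGLE, GPU_HIGH_RES)
```

With these numbers, the second core speeds up the low resolution case but changes nothing at high resolution, because the GPU's 20 ms per frame dominates either way.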

As a closing comment, there was a lot of material presented, and in the interest of time and space we have not covered every single topic that was discussed. We did try to hit all the major highlights, and if you have any further questions, please join the comments section and we will do our best to respond.

55 Comments

edfcmc - Thursday, November 23, 2006 - link

I always thought the dude with the valve in his eye was Gabe Newell. Now I know better.

msva124 - Thursday, November 9, 2006 - link

Um.....you're kidding, right?

JarredWalton - Thursday, November 9, 2006 - link

Nope. That's what Valve said. Graphics and animations can be improved, but there are lots of other gameplay issues that have been pushed to the side in pursuit of better graphics. With cards like the GeForce 8800, they should be able to do just about anything they want on the graphics side of things, so now they just need to do more in other areas.

msva124 - Thursday, November 9, 2006 - link

And 640K of RAM should be enough for anybody? Yeah right. There are certainly other things besides graphics that need to be tended to, but when even rendered cutscenes don't look convincing, it's extremely premature to say.

JarredWalton - Thursday, November 9, 2006 - link

So you would take further increases in graphics over anything else? Personally, I'm quite happy with what I see in many titles of the past 3 years. Doom 3, Far Cry, Quake 4, Battlefield 2, Half-Life 2... I can list many more. All of those look more than good enough to me. Could they be better? SURE! Do they need to be? Not really. I'd much rather have some additional improvements besides just prettier graphics, and that's what Valve was getting at.

What happens if you manage to create a photo-realistic game, but the AI sucks, the physics sucks, and the way things actually move and interact with each other isn't at all convincing? Is photo-realism (which is basically the next step -- just look at Crysis screenshots and tell me that 8800 GTX isn't powerful enough) so important that we should ignore everything else? Heck, some games are even better because they *don't* try for realism. Psychonauts anyone? Or even Darwinia? Team Fortress 2 is going for a more cartoony and stylistic presentation, and it looks pretty damn entertaining.

The point is, ignoring most other areas and focusing on graphics is becoming a dead end for a lot of people. What game is the biggest money maker right now? World of Warcraft! A game that will play exceptionally well on anything the level of X800 Pro/GeForce 6800 GT or faster. There are 7 million people paying $15 per month that have basically said that compelling multiplayer environments are more important to them than graphics.

msva124 - Thursday, November 9, 2006 - link

Fine. You win.

JarredWalton - Thursday, November 9, 2006 - link

Sorry if I was a bit too argumentative. Basically, the initial statement is still *Valve's* analysis. You can choose to agree or disagree, but I think it's pretty easy to agree that in general there are certainly other things that can be done besides just improving graphics. I don't think Valve intends to *not* improve graphics, though; just that it's not the only thing they need to worry about. Until we get next-gen games that make use of quad cores, though, the jury is out on whether or not "new gameplay" is going to be as compelling as better visuals.

Cheers!

Jarred

cryptonomicon - Wednesday, November 8, 2006 - link

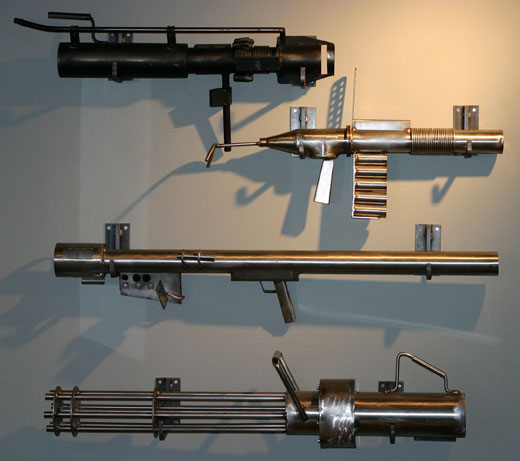

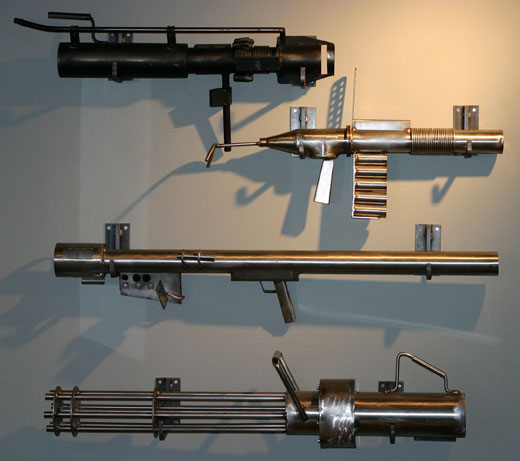

http://images.anandtech.com/reviews/tradeshows/200...

So, what are those steel or aluminum models at the end? Are they the real world references for the Team fortress source weapon models? :D

yyrkoon - Wednesday, November 8, 2006 - link

I'm pretty sure 'it' in this sentence should be "it's", or "it is" (sorry, but it was bad enough to stop me when reading, thinking I mis-read the sentence somehow).

Good article, and it will be interesting to see who follows suit, and when. Hopefully this will become the latest fad in programming, and has me wanting to code my own services here at home for encoding video, or anything that takes more than a few minutes ;)

yyrkoon - Wednesday, November 8, 2006 - link

meh, sorry, that 'typo' is on the second to the last page, I guess about half way down :/