Intel Alder Lake DDR5 Memory Scaling Analysis With G.Skill Trident Z5

by Gavin Bonshor on December 23, 2021 9:00 AM EST

Scaled Gaming Performance: High Resolution

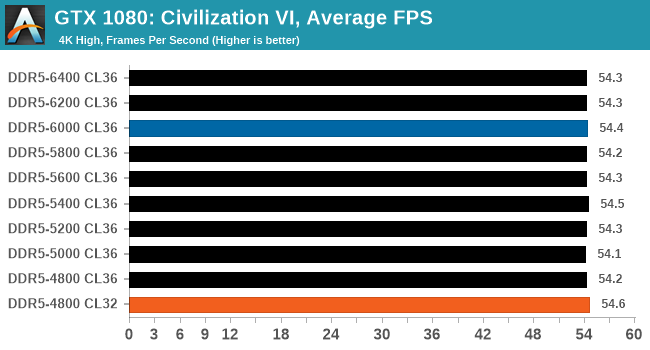

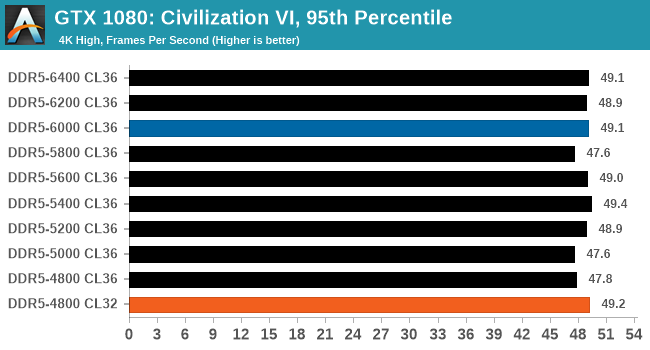

Civilization 6

Originally penned by Sid Meier and his team, the Civilization series of turn-based strategy games is a cult classic, and many an excuse for an all-nighter trying to get Gandhi to declare war on you due to an integer underflow. Truth be told, I never actually played the first version, but I have played every edition from the second to the sixth, including the fourth as voiced by the late Leonard Nimoy, and it is a game that is easy to pick up, but hard to master.

Benchmarking Civilization has always been somewhat of an oxymoron: for a turn-based strategy game, the frame rate is not necessarily the important thing, and in the right mood, something as low as 5 frames per second can be enough. With Civilization 6, however, Firaxis went hardcore on visual fidelity, trying to pull you into the game. As a result, Civilization can be taxing on graphics cards and CPUs as we crank up the details, especially in DirectX 12.

Blue is XMP; Orange is JEDEC at Low CL

Performance in Civ VI shows there is very little benefit to be had by going from DDR5-4800 to DDR5-6400. The results also show that Civ VI actually benefits from lower latencies, with DDR5-4800 at CL32 outperforming all the other frequencies tested at CL36.
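For context on the kits being compared, CAS latency in clock cycles can be converted to nanoseconds, and a data rate to theoretical peak bandwidth. The following is a rough sketch, not part of our test methodology: the function names are ours, and the 128-bit bus width assumes a dual-channel desktop configuration.

```python
def cas_latency_ns(data_rate_mts: int, cl: int) -> float:
    """CAS latency in ns: CL cycles at a memory clock of data_rate / 2 MHz."""
    return cl * 2000.0 / data_rate_mts


def peak_bandwidth_gbs(data_rate_mts: int, bus_width_bits: int = 128) -> float:
    """Theoretical peak bandwidth in GB/s for the given total bus width.

    The 128-bit default is an assumption (dual-channel operation).
    """
    return data_rate_mts * (bus_width_bits / 8) / 1000.0


# Settings named in the text; the other tested speeds are omitted here.
configs = [(4800, 32), (6000, 36), (6400, 36)]

for rate, cl in configs:
    print(
        f"DDR5-{rate} CL{cl}: "
        f"{cas_latency_ns(rate, cl):.2f} ns CAS, "
        f"{peak_bandwidth_gbs(rate):.1f} GB/s peak"
    )
```

CAS time is only one part of the latency a game actually sees, so these figures give context for the settings rather than an explanation of the chart.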

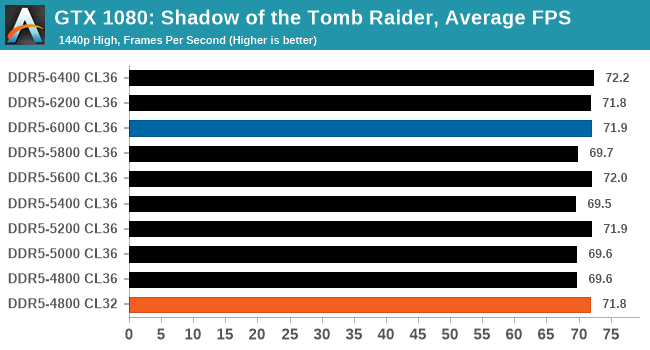

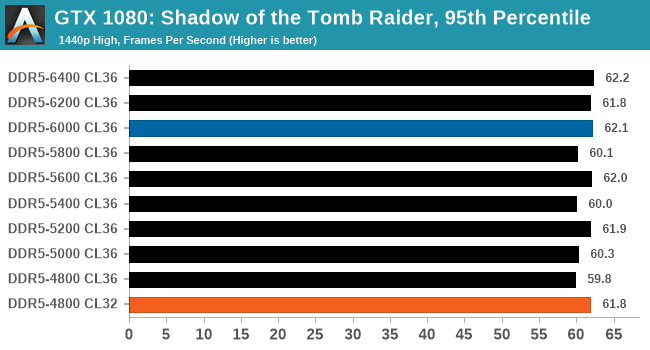

Shadow of the Tomb Raider (DX12)

The latest installment of the Tomb Raider franchise does less rising and lurks more in the shadows with Shadow of the Tomb Raider. As expected, this action-adventure follows Lara Croft, the main protagonist of the franchise, as she muscles through the Mesoamerican and South American regions looking to stop a Mayan apocalypse she herself unleashed. Shadow of the Tomb Raider is the direct sequel to Rise of the Tomb Raider. It was developed by Eidos Montreal and Crystal Dynamics and published by Square Enix, hitting shelves across multiple platforms in September 2018. This title effectively closes the Lara Croft Origins story and received critical acclaim upon its release.

The integrated Shadow of the Tomb Raider benchmark is similar to that of the previous game Rise of the Tomb Raider, which we have used in our previous benchmarking suite. The newer Shadow of the Tomb Raider uses DirectX 11 and 12, with this particular title being touted as having one of the best implementations of DirectX 12 of any game released so far.

Blue is XMP; Orange is JEDEC at Low CL

Looking at our results in Shadow of the Tomb Raider, we did see some improvements in performance scaling from DDR5-4800 to DDR5-6400. The biggest improvement came when testing DDR5-4800 CL32, which performed similarly to DDR5-6000 CL36.

Strange Brigade (DX12)

Strange Brigade is set in Egypt in 1903 and follows a story which is very similar to that of the Mummy film franchise. This particular third-person shooter is developed by Rebellion Developments, which is more widely known for games such as the Sniper Elite and Alien vs Predator series. The game follows the hunt for Seteki the Witch Queen, who has arisen once again, and the only ‘troop’ who can ultimately stop her. Gameplay is cooperative-centric, with a wide variety of different levels and many puzzles which need solving by the British colonial Secret Service agents sent to put an end to her reign of barbarism and brutality.

The game supports both the DirectX 12 and Vulkan APIs and houses its own built-in benchmark which offers various options up for customization including textures, anti-aliasing, reflections, draw distance and even allows users to enable or disable motion blur, ambient occlusion and tessellation among others. AMD has boasted previously that Strange Brigade is part of its Vulkan API implementation offering scalability for AMD multi-graphics card configurations. For our testing, we use the DirectX 12 benchmark.

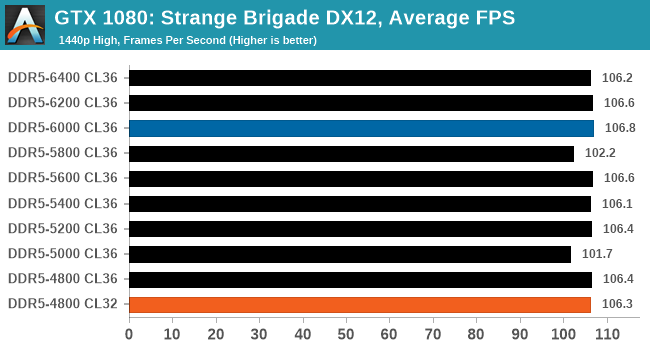

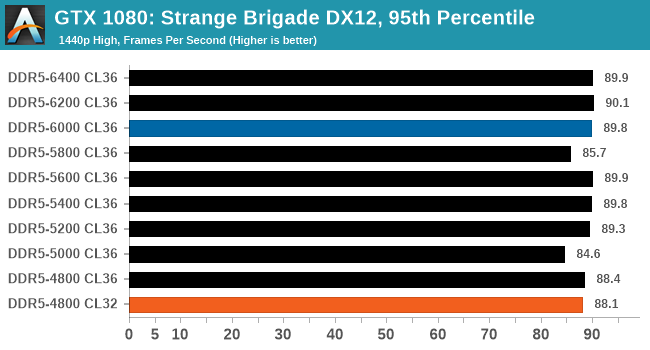

Blue is XMP; Orange is JEDEC at Low CL

Performance in Strange Brigade wasn't influenced by the frequency and latency of the G.Skill Trident Z5 DDR5 memory in terms of average frame rates. We do note, however, that 95th percentile performance does, for the most part, improve as we increase the memory frequency.
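To illustrate how an average can stay flat while a percentile figure moves, here is a minimal sketch that derives both from a list of per-frame render times. It assumes a nearest-rank percentile on frame times; the function name and the sample data are hypothetical, and this is not necessarily how the benchmark itself computes its numbers.

```python
def fps_summary(frame_times_ms: list[float]) -> tuple[float, float]:
    """Return (average FPS, 95th percentile FPS) from frame times in ms."""
    n = len(frame_times_ms)
    # Average FPS: total frames divided by total elapsed time.
    avg_fps = 1000.0 * n / sum(frame_times_ms)
    # Nearest-rank 95th percentile frame time (integer math avoids
    # floating-point rounding in the rank calculation).
    ordered = sorted(frame_times_ms)
    rank = (95 * n + 99) // 100          # ceil(0.95 * n)
    p95_ms = ordered[max(rank, 1) - 1]
    # 95% of frames were rendered at least this fast.
    return avg_fps, 1000.0 / p95_ms


# Hypothetical run: mostly 10 ms frames with a couple of 25 ms spikes.
sample = [10.0] * 18 + [25.0] * 2
avg, p95 = fps_summary(sample)
print(f"average: {avg:.1f} FPS, 95th percentile: {p95:.1f} FPS")
```

A handful of slow frames barely shifts the average but sets the percentile figure, which is why the two metrics can respond differently to memory speed.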

82 Comments

Slash3 - Thursday, December 23, 2021 - link

Process Lasso, not Project Lasso. ;) (This has happened in previous articles, too)

futrtrubl - Thursday, December 23, 2021 - link

Interesting that 5800 seems consistently better in these tests. I wonder if there is a timing/ratio related reason for that.

evilspoons - Thursday, December 23, 2021 - link

Consistently worse, no? The times are longer and the frame rates are lower.

meacupla - Thursday, December 23, 2021 - link

I would like to see IGPU performance with the various speeds. Now, I know the IGPU on desktop alder lake is poor, but AMD 6000 APUs are right around the corner, and I would like to see how well IGPU scales on DDR5 in general.

gagegfg - Thursday, December 23, 2021 - link

There is almost no difference. UHD 770 has very poor performance and does not scale as well with higher bandwidth as it does on AMD's IGPU.

meacupla - Thursday, December 23, 2021 - link

well that's a shame

Wrs - Thursday, December 23, 2021 - link

Amd's iGPU hardly scales on DDR4 - can't really tell it apart from CPU scaling or even run to run variance.

praeses - Thursday, December 23, 2021 - link

They typically see a 10% performance increase going from 3200-4000 with similar timings, the delta grows if you're comparing loose 3200 and tight 4000 timings.

Samus - Thursday, December 23, 2021 - link

I suspect the memory bus is the limiting factor with AMD iGPU's as all of their recent memory architectures (going back to at least Polaris) were 128-bit+ DDR5 or HBM.

The rare, NERFed examples paint a clearer picture: the only Polaris desktop GPU on 64-bit was the R7 435 (I think) and it had DDR3 at 2GHz. It was slower than most APU's at the time and remains one of those recent desktop cards that shouldn't have ever existed for desktop PC's. There just aren't many 64-bit cards, especially on DDR3, that return reasonable gains over integrated graphics; both are going to be so underperforming neither will play games reasonably well.

TheinsanegamerN - Tuesday, December 28, 2021 - link

I think that mostly comes down to AMD's memory controller then, considering those APUs are running on dual channel 128 bit memory busses.

DDR4 just isnt that good for GPUs, easily demonstrated with the GT 1030 which, despite its lack of power, was severely hamstrung on DDR4 VS 5.