Intel's Architecture Day 2018: The Future of Core, Intel GPUs, 10nm, and Hybrid x86

by Dr. Ian Cutress on December 12, 2018 9:00 AM EST

Posted in: CPUs, Memory, Intel, GPUs, DRAM, Architecture, Microarchitecture, Xe

The Next Generation Gen11 Graphics: Playable Games and Adaptive Sync!

Some of the first words out of Raja Koduri’s mouth about graphics were that Intel has a duty to its one billion customers with integrated graphics to give them something useful, and that it is time for Intel to provide graphics that people can actually play games on. Given his expertise on the matter, it shouldn’t sound too far-fetched: more people play games than ever before, and these users want to play no matter what their hardware. To that end, Raja stated that Gen11 graphics is the first step in a new graphics policy to provide the performance and features to let gamers play the most popular games, no matter what implementation.

Gen11: Intel’s first GT2 TFLOPS Graphics

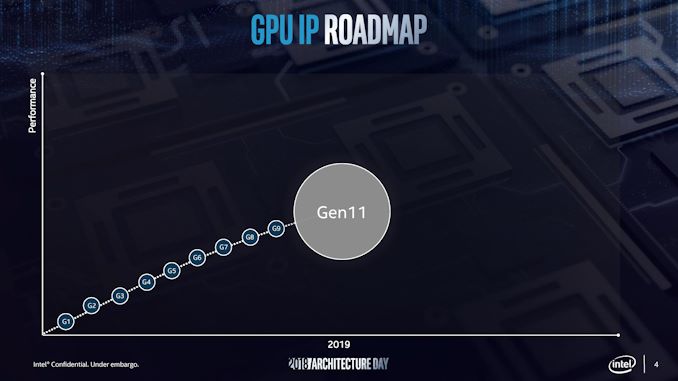

In 2015, Intel launched the Skylake processor with Gen9 integrated graphics. Rather than moving straight to Gen10 the next time around, we were given Gen9.5 in both Kaby Lake and Coffee Lake, which supposedly draws features from what would have been Gen10. The graphics for Intel’s failed 10nm Cannon Lake chip were in fact meant to be called Gen10; however, Intel never released a Cannon Lake processor with working integrated graphics, and because Gen11 goes above and beyond what Gen10 would have been, we’ve gone straight to Gen11. Make sense? Well, Intel didn’t even bother to acknowledge Gen10 in its history graph:

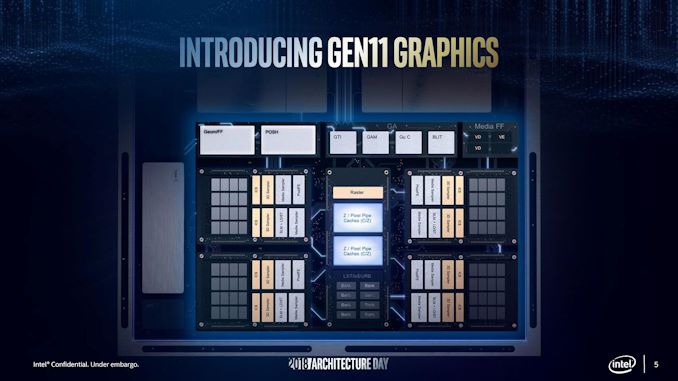

According to the roadmaps, we will see Gen11 graphics paired with Sunny Cove cores on 10nm sometime in 2019. However, rather than a detailed architecture layout for the new product, we were instead given a rather high-level diagram.

From here we can deduce a few things. We were told that this configuration is the GT2 config, which will have 64 execution units, up from 24 in Gen9.5. These 64 EUs are split into four slices, with each slice being made of two sub-slices of 8 EUs apiece. Each sub-slice will have an instruction cache and a 3D sampler, while the bigger slice gets two media samplers, a PixelFE, and additional load/store hardware. Intel lists Gen11 as targeting efficiency, performance, advanced 3D and media capabilities, and a better gaming experience.
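As a quick sanity check on the layout described above, the hierarchy can be multiplied out. This is only a sketch using the figures quoted in the text; the constant and function names are made up for illustration and do not come from Intel.

```python
# Figures quoted in the article: 4 slices, 2 sub-slices per slice, 8 EUs per sub-slice.
SLICES = 4
SUBSLICES_PER_SLICE = 2
EUS_PER_SUBSLICE = 8
GEN9_5_GT2_EUS = 24  # Gen9.5 GT2 EU count quoted in the article


def total_eus(slices: int, subslices_per_slice: int, eus_per_subslice: int) -> int:
    """Return the EU count for a slice / sub-slice / EU hierarchy."""
    return slices * subslices_per_slice * eus_per_subslice


gen11_gt2_eus = total_eus(SLICES, SUBSLICES_PER_SLICE, EUS_PER_SUBSLICE)
# Each sub-slice carries its own instruction cache and 3D sampler.
subslice_count = SLICES * SUBSLICES_PER_SLICE
eu_ratio = gen11_gt2_eus / GEN9_5_GT2_EUS

print(f"EUs: {gen11_gt2_eus}, sub-slices: {subslice_count}, vs Gen9.5: {eu_ratio:.2f}x")
```

The product comes out to the 64 EUs Intel quoted, spread over eight sub-slices, or roughly 2.67x the EU count of a Gen9.5 GT2 part.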

Intel didn’t go into too much detail regarding how the EUs achieve higher performance; however, the company did say that the FPU interfaces inside the EU have been redesigned and that it still supports fast (2x) FP16 performance, as seen in Gen9.5. Each EU will support seven threads as before, which works out to 448 hardware threads (64 EUs × 7 threads) across the entire GT2 design. In order to help feed the EUs, Intel states that it has redesigned the memory interface and increased the L3 cache of the GPU to 3 MB, a 4x increase over Gen9.5; the L3 is now a separate block in the unslice section of the GPU.

Other features include tile-based rendering, which Intel stated the graphics hardware will be able to enable or disable on a per-render-pass basis. This will make Intel the final member of the PC GPU vendor community to implement this, following NVIDIA in 2014 and AMD in 2017. While not a panacea for all performance woes, a good tile rendering setup plays well to the bandwidth limitations of an integrated GPU. Meanwhile, Intel's lossless memory compression has also improved, with Intel listing a best-case performance boost of 10% or a geometric mean boost of 4%. The GTI interface now supports 64 bytes per clock for both reads and writes to increase throughput, which works with the better memory interface.
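To illustrate the general idea behind tile-based rendering, here is a minimal sketch of the binning step, in which triangles are sorted into screen tiles so that each tile can later be rendered out of on-chip storage. It is a generic bounding-box binner, not a description of Intel's hardware; the 32-pixel tile size, the function name, and the sample triangles are all hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

Point = Tuple[float, float]
Triangle = Tuple[Point, Point, Point]


def bin_triangles(
    triangles: List[Triangle], width: int, height: int, tile: int = 32
) -> Dict[Tuple[int, int], List[int]]:
    """Map each screen tile (tx, ty) to the indices of triangles whose
    bounding box overlaps it (conservative: may include near misses)."""
    tiles_x = (width + tile - 1) // tile
    tiles_y = (height + tile - 1) // tile
    bins: Dict[Tuple[int, int], List[int]] = defaultdict(list)
    for index, tri in enumerate(triangles):
        xs = [p[0] for p in tri]
        ys = [p[1] for p in tri]
        # Convert the bounding box to tile coordinates and clamp to the screen.
        x0 = max(0, int(min(xs)) // tile)
        x1 = min(tiles_x - 1, int(max(xs)) // tile)
        y0 = max(0, int(min(ys)) // tile)
        y1 = min(tiles_y - 1, int(max(ys)) // tile)
        for ty in range(y0, y1 + 1):
            for tx in range(x0, x1 + 1):
                bins[(tx, ty)].append(index)
    return dict(bins)


# Hypothetical input: one small triangle and one spanning four tiles.
sample = [
    ((0.0, 0.0), (10.0, 0.0), (0.0, 10.0)),
    ((40.0, 40.0), (70.0, 40.0), (40.0, 70.0)),
]
bins = bin_triangles(sample, width=128, height=128)
print(sorted(bins.items()))
```

Once geometry is binned this way, a renderer can process one tile at a time and keep that tile's color and depth data close to the GPU, which is where the bandwidth saving for an integrated part would come from.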

Coarse Pixel Shading, Intel's implementation of multi-rate shading and similar in scope to NVIDIA’s own Variable Rate Shading, is also supported. This allows the GPU to reduce the total amount of shading work required by shading some pixels on a less than 1:1 basis. Intel showed two demos for CPS, where pixel shading was reduced either as a function of object distance from the camera (so less work is done when things are further away), or as a function of how far the object is from the center of the screen, designed to help features like foveated rendering for VR. With a 2x2 pixel stencil applied, meaning only one pixel shading operation was done per block of 4 pixels, Intel stated a ~30% increase in frame rates in supported games. Unfortunately this needs to be applied on a game-by-game basis in order to prevent significant image quality losses, so the performance gains won't be immediate or universal.
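The arithmetic behind the 2x2 stencil can be sketched as follows. This is an illustrative model only: it counts pixel-shader invocations for a single full-screen pass, and the distance thresholds in `rate_for_distance` are hypothetical values chosen for the example, not anything Intel disclosed.

```python
import math
from typing import Tuple


def shading_invocations(width: int, height: int, block_w: int = 1, block_h: int = 1) -> int:
    """Pixel-shader invocations for one full-screen pass, one invocation per block."""
    return math.ceil(width / block_w) * math.ceil(height / block_h)


def rate_for_distance(distance: float, near: float = 10.0, far: float = 50.0) -> Tuple[int, int]:
    """Pick a coarse block size from object distance (thresholds are hypothetical)."""
    if distance < near:
        return (1, 1)  # full rate for nearby objects
    if distance < far:
        return (2, 1)  # half rate at mid range
    return (2, 2)      # quarter rate for distant objects


full = shading_invocations(1920, 1080)
coarse = shading_invocations(1920, 1080, *rate_for_distance(100.0))
print(full, coarse, coarse / full)
```

At 1080p a 2x2 block cuts shader invocations to a quarter, yet the frame rate gain Intel quoted is closer to 30%, which would be consistent with pixel shading being only one part of the total frame cost.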

For the media block, Intel says that the Gen11 design includes a ground-up HEVC encoder design, with high-quality encode and decode support. Intel cited the fact that its media fixed-function units are already used in the datacenter for video processing, and home users can take advantage of the same hardware. Intel also stated that its parallel decoders can either handle concurrent video streams or be combined to handle a single large stream, and that this scalable design will allow future hardware to push peak resolutions up to 8K and beyond.

The highlight of the display engine is support for Adaptive Sync technologies. We were told that it was announced back at the launch of Skylake, but now it is finally ready to go into Intel’s integrated graphics. This goes hand in hand with HDR support, thanks to the display engine's high-precision data path.

One thing in this presentation that Intel didn’t mention directly is that Gen11 graphics would appear to have Type-C video output support, potentially indicating that Intel has integrated the necessary mux into the chipset itself, removing another IC from the motherboard design.

148 Comments

nathanddrews - Wednesday, December 12, 2018

I know the meme about gaming on Intel graphics, but if they implement Adaptive Sync *combined* with some sort of low framerate compensation, it would make gaming on Intel IGP much less hilarious. Can Intel license FreeSync without using AMD GPU inside? I know FreeSync worked on KLG, but that had an AMD GPU.

RarG123 - Wednesday, December 12, 2018

Like many of AMD's things, FS is an open standard and royalty free. Anyone can use it.

Ryan Smith - Wednesday, December 12, 2018

More specifically, Freesync 1 is just AMD's implementation of DisplayPort Adaptive Sync. Intel has to build their own implementation in their display controller and driver stack, but past that all the signaling aspects to the monitor are standardized.

Topweasel - Wednesday, December 12, 2018

Ryan, I thought that was reversed, that AMD worked on adding Adaptive Sync into the specs and worked on making sure its implementation matched what they were doing with Freesync.

kpb321 - Wednesday, December 12, 2018

IIRC it's a bit of both. Adaptive Sync was present in the eDP standard for things like laptop monitors or tablets as a power saving feature. AMD brought this to the desktop side of things to use for variable framerates in games and helped the standard bring it over too.

edzieba - Wednesday, December 12, 2018

'Adaptive Sync' is effectively the eDP Panel Self Refresh ported over to the full DP spec.

drunkenmaster - Wednesday, December 12, 2018

Freesync utilises adaptive sync. Adaptive Sync is the technology on the screen side; a screen must support adaptive sync to be used by Freesync. Freesync is just the AMD side of it. If an adaptive sync capable screen is detected you can turn on freesync in drivers. Adaptive sync was a standard written up and proposed by AMD and given to, I forget who it is now, the Displayport group directly or to Vesa. They accepted it and implemented it pretty quickly, but as with all things, standards take a long time to get integrated into the next cycle or two of products. Anyone can use Adaptive sync panels; no one but AMD can use freesync, as it's something specific to their hardware and drivers. Intel will produce their own specific driver/implementation and just connect to adaptive sync panels in the same way.

porcupineLTD - Wednesday, December 12, 2018

So Intel is going straight to chiplets on interposer, it will be interesting to see if AMD adopts this with Zen 3 or waits until Zen 4. Anyway it's nice to see competition doing its job.

Alexvrb - Wednesday, December 12, 2018

We don't know yet exactly how much logic Intel is moving to the interposer. It looks awesome for mobile form factors! I think they will face some challenges to bring it to high-TDP desktop solutions, though.

ajc9988 - Wednesday, December 12, 2018

http://www.eecg.toronto.edu/~enright/micro14-inter...
http://www.eecg.toronto.edu/~enright/Kannan_MICRO4...
https://youtu.be/G3kGSbWFig4
https://seal.ece.ucsb.edu/sites/seal.ece.ucsb.edu/...
https://www.youtube.com/watch?v=d3RVwLa3EmM&t=...